GIRAFFE: Representing Scenes as Compositional Generative Neural Feature Fields

2021

Conference Paper

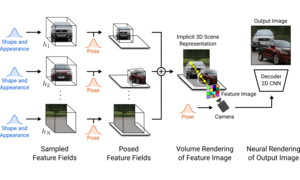

Deep generative models allow for photorealistic image synthesis at high resolutions. But for many applications, this is not enough: content creation also needs to be controllable. While several recent works investigate how to disentangle underlying factors of variation in the data, most of them operate in 2D and hence ignore that our world is three-dimensional. Further, only few works consider the compositional nature of scenes. Our key hypothesis is that incorporating a compositional 3D scene representation into the generative model leads to more controllable image synthesis. Representing scenes as compositional generative neural feature fields allows us to disentangle one or multiple objects from the background as well as individual objects' shapes and appearances while learning from unstructured and unposed image collections without any additional supervision. Combining this scene representation with a neural rendering pipeline yields a fast and realistic image synthesis model. As evidenced by our experiments, our model is able to disentangle individual objects and allows for translating and rotating them in the scene as well as changing the camera pose.
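To make the idea of a compositional scene concrete, the following minimal sketch shows one plausible way to query several posed objects at a single 3D point and merge their outputs. It is an illustration under stated assumptions, not the paper's implementation: the abstract does not spell out the composition operator, so summing densities and averaging features weighted by density is assumed here, and `toy_field`, `world_to_object`, `compose`, and `query_scene` are hypothetical names. The learned feature fields are replaced by a fixed analytic ellipsoid.

```python
import math

def rotate_z(point, angle):
    """Rotate a 3D point about the z-axis by `angle` radians."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y, z)

def world_to_object(point, translation, angle):
    """Map a world-space point into an object's own frame.

    Assumes the object pose is a z-rotation followed by a translation,
    so the inverse is: subtract the translation, then rotate back.
    """
    shifted = tuple(p - t for p, t in zip(point, translation))
    return rotate_z(shifted, -angle)

def toy_field(point, feature):
    """Hypothetical stand-in for a learned feature field.

    Returns a density that decays away from the object's origin
    (an ellipsoid, so that rotation matters) and a constant feature.
    """
    x, y, z = point
    density = math.exp(-(x * x + 4.0 * y * y + z * z))
    return density, feature

def compose(densities, features):
    """Merge per-object outputs at one point.

    Assumption: densities add up, and features are averaged
    with the densities as weights.
    """
    total = sum(densities)
    dim = len(features[0])
    if total == 0.0:
        return 0.0, [0.0] * dim
    mixed = [
        sum(d * f[i] for d, f in zip(densities, features)) / total
        for i in range(dim)
    ]
    return total, mixed

def query_scene(point, objects):
    """Evaluate every object at `point` in its own frame, then compose."""
    outputs = [
        toy_field(world_to_object(point, o["translation"], o["angle"]), o["feature"])
        for o in objects
    ]
    return compose([d for d, _ in outputs], [f for _, f in outputs])

# Two objects with distinct features; the second sits at x = 2.
scene = [
    {"translation": (0.0, 0.0, 0.0), "angle": 0.0, "feature": [1.0, 0.0]},
    {"translation": (2.0, 0.0, 0.0), "angle": 0.0, "feature": [0.0, 1.0]},
]
density, feature = query_scene((2.0, 0.0, 0.0), scene)
```

Because each object carries its own pose, editing the scene amounts to changing one entry's `translation` or `angle` and querying again; the other objects are unaffected. In this sketch, moving the second object away from the query point shifts the composed feature back toward the first object's feature.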

| Author(s): | Michael Niemeyer and Andreas Geiger |
| Book Title: | Conference on Computer Vision and Pattern Recognition (CVPR) |
| Year: | 2021 |
| Department(s): | Autonomous Vision |
| Bibtex Type: | Conference Paper (inproceedings) |
| Links: | pdf, suppmat, video, Project Page |

BibTeX:

@inproceedings{Niemeyer2021CVPR,
  title = {GIRAFFE: Representing Scenes as Compositional Generative Neural Feature Fields},
  author = {Niemeyer, Michael and Geiger, Andreas},
  booktitle = {Conference on Computer Vision and Pattern Recognition (CVPR)},
  year = {2021}
}