Semantic Instance Annotation of Street Scenes by 3D to 2D Label Transfer

2016

Conference Paper


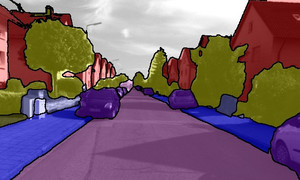

Semantic annotations are vital for training models for object recognition, semantic segmentation or scene understanding. Unfortunately, pixelwise annotation of images at very large scale is labor-intensive and only little labeled data is available, particularly at instance level and for street scenes. In this paper, we propose to tackle this problem by lifting the semantic instance labeling task from 2D into 3D. Given reconstructions from stereo or laser data, we annotate static 3D scene elements with rough bounding primitives and develop a probabilistic model which transfers this information into the image domain. We leverage our method to obtain 2D labels for a novel suburban video dataset which we have collected, resulting in 400k semantic and instance image annotations. A comparison of our method to state-of-the-art label transfer baselines reveals that 3D information enables more efficient annotation while at the same time resulting in improved accuracy and time-coherent labels.
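As a rough illustration of what transferring 3D labels into the image domain involves geometrically, the sketch below projects labeled 3D points into a camera image with a pinhole model and keeps the nearest point per pixel. It is not the probabilistic model proposed in the paper, only a simple geometric projection under stated assumptions. The function name `transfer_labels`, its parameters, and the toy intrinsics are hypothetical and chosen for illustration.

```python
import numpy as np


def transfer_labels(points_cam, labels, K, height, width):
    """Project labeled 3D points (camera frame, z forward) into a label image.

    points_cam: (N, 3) float array of 3D points in camera coordinates.
    labels:     (N,) non-negative integer labels, one per point.
    K:          (3, 3) pinhole intrinsics matrix (assumed, no distortion).
    Returns a (height, width) integer array; -1 marks pixels with no label.
    """
    label_map = np.full((height, width), -1, dtype=np.int64)
    depth_map = np.full((height, width), np.inf)

    # Keep only points in front of the camera.
    in_front = points_cam[:, 2] > 0
    pts, lbl = points_cam[in_front], labels[in_front]

    # Pinhole projection: homogeneous pixel = K @ point, then divide by depth.
    uvw = pts @ K.T
    u = np.floor(uvw[:, 0] / uvw[:, 2]).astype(int)
    v = np.floor(uvw[:, 1] / uvw[:, 2]).astype(int)
    z = pts[:, 2]

    # Discard projections that fall outside the image.
    inside = (u >= 0) & (u < width) & (v >= 0) & (v < height)

    # Simple z-buffer: the nearest point at each pixel determines its label.
    for ui, vi, zi, li in zip(u[inside], v[inside], z[inside], lbl[inside]):
        if zi < depth_map[vi, ui]:
            depth_map[vi, ui] = zi
            label_map[vi, ui] = li
    return label_map


if __name__ == "__main__":
    # Toy intrinsics and points, made up for this example.
    K = np.array([[100.0, 0.0, 2.0],
                  [0.0, 100.0, 2.0],
                  [0.0, 0.0, 1.0]])
    points = np.array([[0.0, 0.0, 10.0],
                       [0.0, 0.0, 5.0],
                       [-1.0, 0.0, 100.0]])
    print(transfer_labels(points, np.array([1, 2, 4]), K, 4, 4))
```

A projection like this yields only sparse labels and handles occlusion crudely, which suggests why a model that reasons jointly over 3D annotations and image evidence is useful for dense, time-coherent labels.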

| Field | Value |
| --- | --- |
| Author(s): | Jun Xie and Martin Kiefel and Ming-Ting Sun and Andreas Geiger |
| Book Title: | IEEE Conf. on Computer Vision and Pattern Recognition (CVPR) |
| Pages: | 3688-3697 |
| Year: | 2016 |
| Month: | June |
| Department(s): | Autonomous Vision, Perceiving Systems |
| Research Project(s): | Image Segmentation and Semantics; Global Localization and Affordance Learning |
| Bibtex Type: | Conference Paper (inproceedings) |
| Paper Type: | Conference |
| Event Name: | IEEE International Conference on Computer Vision and Pattern Recognition (CVPR) 2016 |
| State: | Published |
| Links: | pdf, suppmat, Project Page |

BibTex:

    @inproceedings{Xie2016CVPR,
      title = {Semantic Instance Annotation of Street Scenes by {3D} to {2D} Label Transfer},
      author = {Xie, Jun and Kiefel, Martin and Sun, Ming-Ting and Geiger, Andreas},
      booktitle = {IEEE Conf. on Computer Vision and Pattern Recognition (CVPR)},
      pages = {3688-3697},
      month = jun,
      year = {2016},
      doi = {},
      month_numeric = {6}
    }